Direct Answer: A useful ICP score is an auditable composite of a small number of fit attributes, weighted against your actual closed-won distribution — not a hand-tuned belief system. If a rep can't explain why an account scored an 84 and the score doesn't track historical win rate, the model is decoration. Calibrate, document, and recompute quarterly.

ICP Scoring: The Short Answer

- Inputs are auditable. Every attribute has a definition, source, and refresh cadence.

- Scale is calibrated against closed-won, not invented or copied from a vendor template.

- Reps can explain any score in one sentence using the contributing fields.

- The model is recomputed at least quarterly so the scale doesn't silently drift.

Common Misconceptions About ICP Scoring

Three patterns explain most failed scoring rollouts:

- "More attributes = better score." A 28-feature model with a rationalized weighting matrix typically beats a 7-feature model only on paper. In production, sparse fields dominate the variance and reps stop trusting the output the first time it surfaces a wildly off-fit account.

- "AI/ML will figure out the weights." Modeled weights are only as honest as the closed-won label they trained against. If your CRM marks "closed-won" inconsistently, the model learns the inconsistency.

- "The score is the decision." A score is one input. Treat it as a queue ranker with explicit override rules, not as a gating threshold that quietly suppresses accounts a rep should still see.
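The queue-ranker idea in the last point can be sketched in a few lines. This is a minimal illustration, assuming hypothetical account records with `name` and `score` fields and a set of rep-pinned accounts; the function names and the log structure are invented for the example. Nothing is filtered out: low scorers stay visible at the bottom of the queue.

```python
def record_override(log, account, rep, reason):
    """Append one override entry so disagreement patterns can be reviewed later."""
    log.append({"account": account, "rep": rep, "reason": reason})

def rank_queue(accounts, pinned):
    """Sort by score, floating rep-pinned accounts to the top.

    The score only orders the queue; it never removes an account from it.
    """
    return sorted(accounts,
                  key=lambda a: (a["name"] in pinned, a["score"]),
                  reverse=True)

# Illustrative data only.
accounts = [
    {"name": "Acme", "score": 84},
    {"name": "Globex", "score": 41},
    {"name": "Initech", "score": 67},
]
override_log = []
record_override(override_log, "Globex", "rep_17", "existing champion moved here")
pinned = {entry["account"] for entry in override_log}
queue = rank_queue(accounts, pinned)
print([a["name"] for a in queue])
```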

What Actually Makes One ICP Score Better Than Another?

Five qualities, in priority order:

- Calibration against closed-won. A score of 80 should correspond to a known historical win rate band. Without that mapping the number is opinion in a numeric disguise.

- A small set of high-coverage attributes. Five to eight fields that are present on >90% of records beat twenty fields that are sparse. Sparsity creates phantom precision.

- Per-attribute provenance. Every input lists its source (CRM field, enrichment vendor, derived rule) and last-refreshed date.

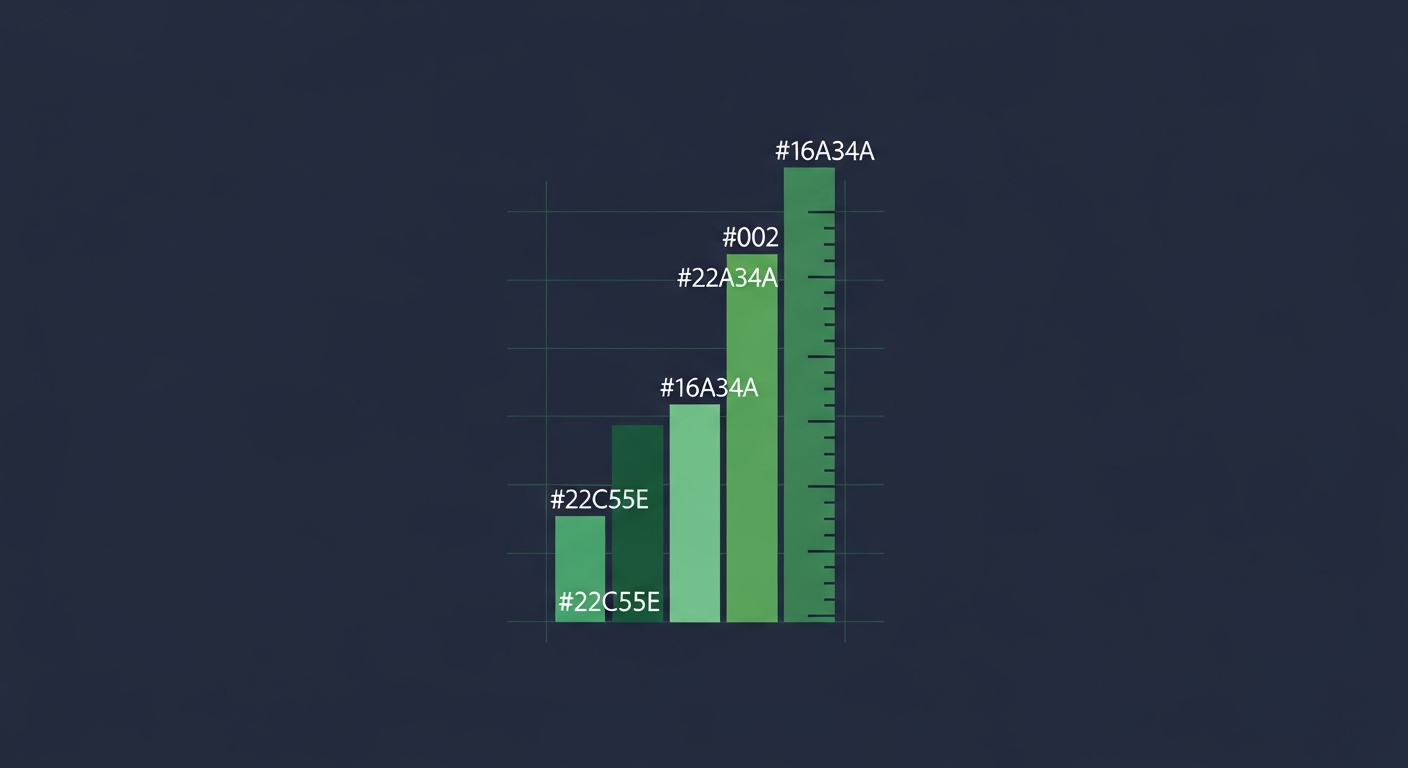

- Tier definitions, not just numbers. Tiers such as A1 / A2 / B1 / B2, each with a prose definition reps can read in five seconds, outperform a raw 0–100 score that looks scientific but resists interpretation.

- A documented retraining cadence. "Recomputed each quarter from the last 8 quarters of closed-won" is operational. "Refreshed when marketing requests" is not.
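The calibration mapping in the first point can be sketched as a small table of win rate per score band. This is a minimal sketch, assuming each closed deal is available as a `(score, won)` pair; closed-lost deals are included because a win rate needs a denominator. The band width of 20 and the sample data are illustrative assumptions, not recommendations or real results.

```python
from collections import defaultdict

def win_rate_by_band(deals, band_width=20):
    """Return {band_start: (n_deals, win_rate)} for closed deals.

    `deals` is an iterable of (score, won) pairs: score is 0-100, won is
    True for closed-won and False for closed-lost. Assumes band_width
    divides 100 evenly.
    """
    counts = defaultdict(lambda: [0, 0])  # band_start -> [wins, total]
    for score, won in deals:
        # Fold a score of exactly 100 into the top band.
        band = min(int(score) // band_width * band_width, 100 - band_width)
        counts[band][0] += int(won)
        counts[band][1] += 1
    return {band: (total, wins / total)
            for band, (wins, total) in sorted(counts.items())}

# Illustrative data only.
history = [(92, True), (85, True), (81, False), (64, True),
           (61, False), (45, False), (38, False), (22, False)]
for band, (n, rate) in win_rate_by_band(history).items():
    print(f"band starting at {band}: n={n}, win rate={rate:.0%}")
```

If the win rate does not rise from band to band, the score is not yet calibrated, whatever the weights look like.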

What to Check Before You Roll Out an ICP Score

Before pushing a score into pipeline reviews:

- Confirm the closed-won label is consistent across business units. If sales calls a stalled deal "closed-won" to hit quota, the model inherits the lie.

- Backtest the score on the previous four quarters of closed-won before forward-deploying. If the accounts that actually closed would have scored low, fix the model first.

- Publish the model card. One page: features, weights (or rules), training window, last refresh date, owner.

- Make the score explainable per-account. A rep should see "score 84 driven by industry, headcount band, and tech stack match."

- Decide what overrides the score and log every override. Override patterns are the highest-signal training data you have.

- Cap how often the score can change for an account. A score that flips weekly because of vendor refresh noise destroys trust faster than a wrong score that stays stable.
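The last point, capping how often a score can change, can be sketched as a cooldown plus a minimum-move rule. This is a minimal sketch: the 30-day cooldown, the 5-point threshold, and the `PublishedScore` type are all assumptions made for illustration, not values from any particular tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class PublishedScore:
    value: int
    changed_on: date

def next_published(current, candidate, today, min_days=30, min_delta=5):
    """Return the score to show reps after a recompute.

    The candidate replaces the published score only if the cooldown has
    elapsed and the move is at least `min_delta` points.
    """
    cooled_down = today - current.changed_on >= timedelta(days=min_days)
    big_enough = abs(candidate - current.value) >= min_delta
    if cooled_down and big_enough:
        return PublishedScore(candidate, today)
    return current

current = PublishedScore(84, date(2024, 1, 1))
# One week later a vendor refresh suggests 70: too soon, keep 84.
print(next_published(current, 70, date(2024, 1, 8)).value)
# Two months later the same candidate is accepted.
print(next_published(current, 70, date(2024, 3, 1)).value)
```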

Comparison: scoring approaches that survive in production

| Dimension | Hand-tuned weights | Black-box ML model | Rule-based tiers + calibrated composite |
|---|---|---|---|
| Reproducibility | High | Medium | High |
| Explainability | High | Low | High |
| Adaptability to new segments | Low | High | Medium |
| Ease of audit | High | Low | High |
| Calibration discipline required | Low | High | Medium |
| Best for | Small, stable ICP | Large, fast-moving ICP | Most B2B revenue teams |
| Risk of silent drift | Low | High | Low if recomputed quarterly |

Frequently Asked Questions

What is ICP scoring?

ICP scoring is a per-account number or tier that estimates how closely an account matches your ideal customer profile. It is used to rank outbound queues, route inbound leads, and prioritize ABM investment. A useful score is calibrated against your historical closed-won distribution, not invented from belief.

How do you build an ICP scoring model in B2B?

Start by writing the prose definition of your ICP, then pick five to eight high-coverage attributes that operationalize it. Score each attribute, weight them against historical closed-won data, and bin the composite into named tiers (A1, A2, B1, B2). Backtest, publish a model card, and recompute quarterly.
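The scoring and binning steps can be sketched as a weighted sum followed by a tier lookup. This is a minimal sketch: the five attribute names, the weights, and the tier cutoffs below are hypothetical placeholders. In practice both the weights and the cutoffs would come out of calibration against closed-won data.

```python
# Hypothetical weights (points, summing to 100) and tier floors.
WEIGHTS = {"industry": 30, "headcount_band": 25, "tech_stack": 20,
           "region": 15, "funding_stage": 10}
TIER_FLOORS = [(80, "A1"), (65, "A2"), (45, "B1"), (0, "B2")]

def composite_score(attribute_scores):
    """Weighted sum of per-attribute scores (each 0.0-1.0) on a 0-100 scale."""
    return round(sum(WEIGHTS[name] * attribute_scores[name] for name in WEIGHTS))

def tier_for(score):
    """Map a 0-100 composite to the first tier whose floor it meets."""
    for floor, tier in TIER_FLOORS:
        if score >= floor:
            return tier
    raise ValueError(f"score out of range: {score}")

# Illustrative account: full match on three attributes, partial on one.
account = {"industry": 1.0, "headcount_band": 1.0, "tech_stack": 0.5,
           "region": 1.0, "funding_stage": 0.0}
score = composite_score(account)
print(score, tier_for(score))
```

Keeping the weights and floors in plain data structures means they can be copied straight into the model card.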

How many attributes should an ICP score include?

Five to eight is the sweet spot for most B2B teams. More attributes sound rigorous, but in practice most are sparse, which makes the score unstable and the explanations brittle. Coverage above 90% per attribute matters more than total attribute count.

Should ICP score be a number or a tier?

Both, with the tier as the primary surface for reps. A 0–100 score is useful for sorting and analytics; a labeled tier (A1, A2, B1, B2) is what reps interpret in seconds during pipeline review. Showing only the number is a common cause of low adoption.

How often should an ICP score be recomputed?

At least quarterly, against the trailing six to eight quarters of closed-won data. Recompute more often than monthly and the score becomes noisy; recompute less often than quarterly and the scale silently drifts as the market and your sales motion change.

How do I get sales to trust the ICP score?

Three things, in order: make every score explainable in one sentence, backtest it on the last four quarters of closed-won and share the result, and log every rep override so the model learns from disagreement instead of being undermined by it.
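The one-sentence explanation can be generated directly from per-attribute contributions. This is a minimal sketch, assuming the scoring step exposes how many points each attribute contributed; the `explain` function and the field labels are hypothetical.

```python
def explain(score, contributions, top_n=3):
    """Build a one-sentence explanation from per-attribute point contributions."""
    top = sorted(contributions, key=contributions.get, reverse=True)[:top_n]
    if not top:
        return f"score {score} (no contributing fields recorded)"
    if len(top) >= 3:
        drivers = ", ".join(top[:-1]) + ", and " + top[-1]
    else:
        drivers = " and ".join(top)
    return f"score {score} driven by {drivers}"

# Illustrative contributions in points; they sum to the composite score.
contributions = {"industry": 30, "headcount band": 25,
                 "tech stack match": 20, "region": 9, "funding stage": 0}
print(explain(84, contributions))
```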

Is "fit" the same as "intent"?

No. ICP fit is a stable attribute of an account (industry, size, tech). Intent is a transient signal that the account is researching or hiring around a problem. A useful prioritization model multiplies the two, so accounts that are both high-fit and high-intent form today's working queue.
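Multiplying fit by intent can be sketched as follows. This is a minimal sketch, assuming both signals are already normalized to a 0.0-1.0 range; the account names and values are invented for illustration.

```python
def priority(fit, intent):
    """Multiply fit and intent (both 0.0-1.0) so a low value on either lowers the rank."""
    return fit * intent

# Illustrative accounts only.
accounts = [
    {"name": "Acme", "fit": 0.9, "intent": 0.8},
    {"name": "Globex", "fit": 0.9, "intent": 0.1},
    {"name": "Initech", "fit": 0.3, "intent": 0.9},
]
queue = sorted(accounts, key=lambda a: priority(a["fit"], a["intent"]), reverse=True)
print([a["name"] for a in queue])
```

A product, unlike a sum, keeps a high-fit account with almost no intent from outranking a moderate-fit account that is actively researching.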

What's the most common ICP scoring failure?

Sparse fields dominating the score. The model "rewards" accounts where your enrichment vendor happens to have data and "punishes" accounts where it doesn't, regardless of fit. Audit attribute coverage before weighting and exclude any field with under 90% coverage from the composite.
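The coverage audit can be sketched as a per-field fill rate followed by a filter. This is a minimal sketch, assuming account records are plain dictionaries where a missing value is `None` or an empty string; the field names and sample data are hypothetical.

```python
def coverage(records, fields):
    """Share of records with a non-empty value for each field."""
    if not records:
        return {f: 0.0 for f in fields}
    n = len(records)
    return {f: sum(1 for r in records if r.get(f) not in (None, "")) / n
            for f in fields}

def usable_fields(records, fields, min_coverage=0.90):
    """Keep only fields that meet the coverage floor before any weighting."""
    return [f for f, c in coverage(records, fields).items() if c >= min_coverage]

# Illustrative data: industry is always present, tech_stack on 6 of 10 records.
records = [{"industry": "SaaS", "tech_stack": "X" if i < 6 else None}
           for i in range(10)]
print(coverage(records, ["industry", "tech_stack"]))
print(usable_fields(records, ["industry", "tech_stack"]))
```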

Next Steps

If you'd like to skip the manual feature-engineering pass and see calibrated tiers running against verified contacts and live signals, compare your current sourcing cost per qualified meeting against the transparent monthly pricing for TheLeadSeeker. The trial gives you 14 days to backtest the tier mapping on your own closed-won data.